Why start with one house?

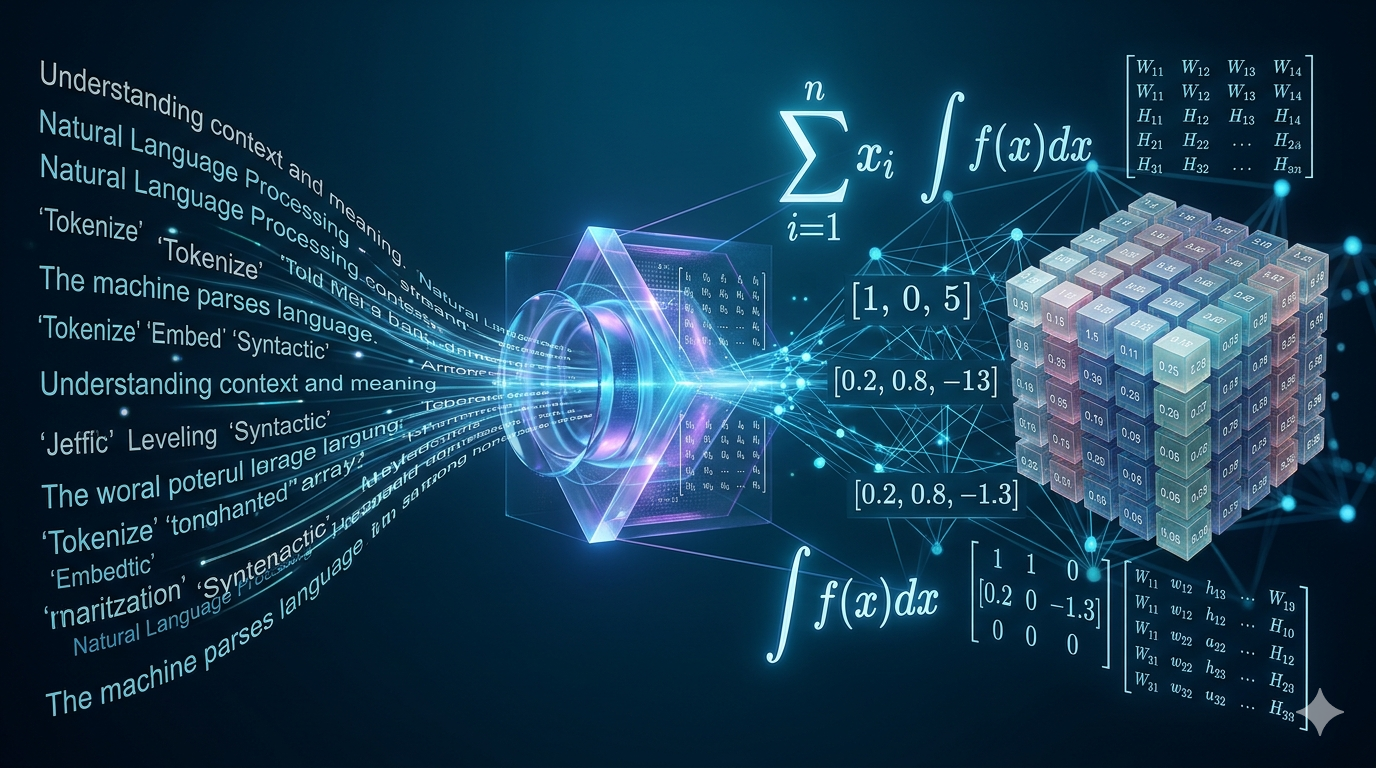

Artificial intelligence begins with data, but data does not enter a model as a vague idea. It must be represented mathematically.

A house, for example, is a real-world object. It has a price, a location, an area, a number of rooms, an age, a height, a neighborhood, and many other properties. A machine-learning model cannot directly understand the sentence:

This is a two-floor house in a good location with a price of 9.0.

The model needs numbers.

So the first question of applied mathematics for AI is:

How do we turn a real object into mathematical objects that a model can compute with?

We begin with the simplest possible case: one number.

A single data point as a scalar

Suppose we only know one thing about a house:

$$ \text{price} = 9.0 $$This number may mean 9.0 million rupees, 9.0 hundred-thousand dollars, or any other chosen unit. The unit is not the main point yet. The mathematical point is that we have one number.

We can name this number:

$$ x = 9.0 $$Here, \(x\) is a scalar.

A scalar is a single number. It has magnitude, but it does not have multiple components.

Examples of scalars are:

$$ x = 9.0 $$

$$ a = 1200 $$

$$ r = 0.05 $$

$$ n = 100 $$

In machine learning, scalars appear everywhere:

- one house price,

- one temperature value,

- one loss value,

- one learning rate,

- one probability,

- one model output for a single regression task.

If we write:

$$ x = 9.0 $$then \(x\) is a scalar data point.

But one number is rarely enough to describe a house.

From one number to many features

A real house is not described only by price. Suppose we observe the following information:

| Property | Value |

|---|---|

| Price | 9.0 |

| Area | 1200 square feet |

| Bedrooms | 3 |

| Floors | 2 |

| Age | 10 years |

| Distance from city center | 3.2 km |

Now the house is no longer represented by one number. It is represented by several numbers.

We may collect these numbers into one ordered list:

$$ [9.0,\;1200,\;3,\;2,\;10,\;3.2] $$This list is the beginning of a vector representation.

Each number describes one property of the house. In machine learning, these properties are usually called features.

So we may write:

$$ x = \begin{bmatrix} 9.0 \\ 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$This object is no longer a scalar. It is a vector.

It has six components, so we say:

$$ x \in \mathbb{R}^6 $$This means:

\(x\) is a vector with 6 real-valued components.

Numerical example: one house

Let the features be ordered as:

$$ \text{features} = [ \text{price}, \text{area}, \text{bedrooms}, \text{floors}, \text{age}, \text{distance} ] $$For one house:

$$ x = \begin{bmatrix} 9.0 \\ 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$The first component is the price:

$$ x_1 = 9.0 $$The second component is the area:

$$ x_2 = 1200 $$The third component is the number of bedrooms:

$$ x_3 = 3 $$The fourth component is the number of floors:

$$ x_4 = 2 $$The fifth component is the age:

$$ x_5 = 10 $$The sixth component is the distance from the city center:

$$ x_6 = 3.2 $$So the vector is not just a list of numbers. It is a structured representation of one house.

Price as an input versus price as a target

There is an important machine-learning distinction here.

If we are simply describing a house, we may include price inside \(x\):

$$ x = \begin{bmatrix} 9.0 \\ 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$But if our task is to predict house price, then price is usually not placed inside the input vector. Instead, price becomes the target value.

Then we write:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$and

$$ y = 9.0 $$Here:

- \(x\) is the input vector,

- \(y\) is the target output,

- the model learns a function that maps \(x\) to \(y\).

That is:

$$ f(x) \approx y $$For house-price prediction:

$$ f \left( \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} \right) \approx 9.0 $$

Why words must become numbers

Suppose we also know the location:

| Property | Value |

|---|---|

| Location | North |

The word "North" cannot directly enter most mathematical models. We need to encode it numerically.

A poor encoding would be:

$$ \text{North}=1,\qquad \text{South}=2,\qquad \text{East}=3 $$This may accidentally tell the model that East is somehow greater than South, and South is greater than North. But neighborhoods usually do not have such a natural numerical order.

A better simple encoding is one-hot encoding.

Suppose the possible locations are:

$$ \{\text{North},\text{South},\text{East}\} $$Then:

$$ \text{North} = \begin{bmatrix} 1 \\ 0 \\ 0 \end{bmatrix} $$

$$ \text{South} = \begin{bmatrix} 0 \\ 1 \\ 0 \end{bmatrix} $$

$$ \text{East} = \begin{bmatrix} 0 \\ 0 \\ 1 \end{bmatrix} $$

Now a house in the North location may be represented as:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \\ 1 \\ 0 \\ 0 \end{bmatrix} $$This vector has 8 components:

$$ x \in \mathbb{R}^8 $$The first five components are numerical house properties. The last three components encode the location.
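The encoding above can be sketched in a few lines of Python. This is a minimal illustration rather than a library API: the helper name `one_hot` and the `LOCATIONS` list are assumptions made only for this example.

```python
import numpy as np

# Hypothetical category list for this example; its order fixes which slot each location uses.
LOCATIONS = ["North", "South", "East"]

def one_hot(value, categories):
    """Return a vector with a 1 at the position of `value` and 0 elsewhere."""
    encoded = np.zeros(len(categories))
    encoded[categories.index(value)] = 1.0
    return encoded

# Numerical features: area, bedrooms, floors, age, distance.
numeric = np.array([1200, 3, 2, 10, 3.2])

# Append the location indicators to get the 8-component input vector.
x = np.concatenate([numeric, one_hot("North", LOCATIONS)])

print(x)
print(x.shape)  # (8,)
```

The category order must stay fixed across all houses, otherwise the same slot would mean different locations in different rows.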

A single row of data

In a spreadsheet or dataset, one house is often stored as one row.

| Area | Bedrooms | Floors | Age | Distance | North | South | East | Price |

|---|---|---|---|---|---|---|---|---|

| 1200 | 3 | 2 | 10 | 3.2 | 1 | 0 | 0 | 9.0 |

This row contains one training example.

If the goal is to predict price, then the input features are:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \\ 1 \\ 0 \\ 0 \end{bmatrix} $$and the target is:

$$ y = 9.0 $$

What one row means

One row usually means:

one object, one observation, one sample, or one data point.

In house-price prediction:

- one row = one house,

- one column = one feature,

- one target column = the value we want to predict.

In image classification:

- one row may represent one image after flattening,

- columns may represent pixel values,

- the target may represent the class label.

In natural language processing:

- one row may represent one sentence,

- columns may represent token IDs or embedding values,

- the target may represent sentiment, next word, or class label.

Features, dimensions, and units

If a vector has \(d\) features, we write:

$$ x \in \mathbb{R}^d $$The number \(d\) is called the dimension of the vector.

For example:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} \in \mathbb{R}^5 $$Here:

$$ d = 5 $$The vector has five components.

However, each component may have a different unit:

| Component | Meaning | Unit |

|---|---|---|

| \(x_1\) | Area | square feet |

| \(x_2\) | Bedrooms | count |

| \(x_3\) | Floors | count |

| \(x_4\) | Age | years |

| \(x_5\) | Distance | kilometers |

This matters because raw numerical values can have very different scales.

For example, area may be around 1200, while bedrooms may be around 3. If we compute distances or dot products directly, the area feature may dominate simply because its numerical scale is larger.

Row vectors and column vectors

The same numbers can be written horizontally or vertically.

Horizontally:

$$ x = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 \end{bmatrix} $$Vertically:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$These look similar, but mathematically they have different shapes.

Row vector

A row vector has one row and many columns.

For example:

$$ x_{\text{row}} = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 \end{bmatrix} $$This has shape:

$$ 1 \times 5 $$So:

$$ x_{\text{row}} \in \mathbb{R}^{1 \times 5} $$A row vector is useful when we think of a data point as one row in a dataset.

Column vector

A column vector has many rows and one column.

For example:

$$ x_{\text{col}} = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$This has shape:

$$ 5 \times 1 $$So:

$$ x_{\text{col}} \in \mathbb{R}^{5 \times 1} $$A column vector is common in linear algebra because many formulas are written using column vectors.

Same numbers, different mathematical shape

The row vector is:

$$ x_{\text{row}} = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 \end{bmatrix} \in \mathbb{R}^{1 \times 5} $$The column vector is:

$$ x_{\text{col}} = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} \in \mathbb{R}^{5 \times 1} $$They contain the same values, but they are not the same matrix-shaped object.

Their shapes are different:

$$ 1 \times 5 \neq 5 \times 1 $$This difference matters when we multiply vectors and matrices.

For example, suppose:

$$ a = \begin{bmatrix} 2 & 3 & 4 \end{bmatrix} $$and

$$ b = \begin{bmatrix} 5 \\ 6 \\ 7 \end{bmatrix} $$Then we multiply \(a\) and \(b\) in that order. Writing \(ab\) means the usual matrix product: a \(1 \times 3\) row times a \(3 \times 1\) column, so the result is a single number (a scalar).

How the entries combine: the first component of \(a\) multiplies the first component of \(b\), the second multiplies the second, the third multiplies the third. Those three products are added — that sum is the value of \(ab\) (also called the dot product of the row and the column).

$$ ab = \begin{bmatrix} 2 & 3 & 4 \end{bmatrix} \begin{bmatrix} 5 \\ 6 \\ 7 \end{bmatrix} $$So:

$$ ab = 2(5) + 3(6) + 4(7) $$

$$ ab = 10 + 18 + 28 = 56 $$

This is a scalar.

But if we reverse the order and multiply \(b\) by \(a\) instead, a \(3 \times 1\) column times a \(1 \times 3\) row, the result is a \(3 \times 3\) matrix. The entry in row \(i\) and column \(j\) is the \(i\)-th component of \(b\) times the \(j\)-th component of \(a\):

$$ ba = \begin{bmatrix} 5 \\ 6 \\ 7 \end{bmatrix} \begin{bmatrix} 2 & 3 & 4 \end{bmatrix} = \begin{bmatrix} 5(2) & 5(3) & 5(4) \\ 6(2) & 6(3) & 6(4) \\ 7(2) & 7(3) & 7(4) \end{bmatrix} = \begin{bmatrix} 10 & 15 & 20 \\ 12 & 18 & 24 \\ 14 & 21 & 28 \end{bmatrix} $$

So:

$$ ab \neq ba $$The order and shape matter.
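The two products can be checked in NumPy. This is a small sketch that stores \(a\) and \(b\) as explicit 2-D arrays so that their row and column shapes are preserved; note that NumPy returns the first product as a \(1 \times 1\) array rather than a bare number.

```python
import numpy as np

a = np.array([[2, 3, 4]])      # shape (1, 3): a row vector
b = np.array([[5], [6], [7]])  # shape (3, 1): a column vector

ab = a @ b  # (1, 3) @ (3, 1) -> shape (1, 1), holding the single value 56
ba = b @ a  # (3, 1) @ (1, 3) -> shape (3, 3)

print(ab.shape, ab[0, 0])  # (1, 1) 56
print(ba.shape)            # (3, 3)
print(ba)
```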

Transpose

The transpose changes rows into columns and columns into rows.

The transpose of an object is written using the symbol:

$$ ^\top $$If \(A\) is a matrix, then its transpose is:

$$ A^\top $$

Scalar transpose

A scalar has no row or column direction.

If:

$$ x = 9.0 $$then:

$$ x^\top = x $$So:

$$ 9.0^\top = 9.0 $$The transpose of a scalar is the same scalar.

Row-to-column transpose

Let:

$$ x_{\text{row}} = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 \end{bmatrix} $$Then:

$$ x_{\text{row}}^\top = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$So the transpose changes a row vector into a column vector.

Shape-wise:

$$ x_{\text{row}} \in \mathbb{R}^{1 \times 5} $$and:

$$ x_{\text{row}}^\top \in \mathbb{R}^{5 \times 1} $$

Column-to-row transpose

Similarly, if:

$$ x_{\text{col}} = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$then:

$$ x_{\text{col}}^\top = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 \end{bmatrix} $$Shape-wise:

$$ x_{\text{col}} \in \mathbb{R}^{5 \times 1} $$and:

$$ x_{\text{col}}^\top \in \mathbb{R}^{1 \times 5} $$

Matrix transpose

What is a matrix (first pass)? For now, think of a matrix as a rectangular grid of numbers: row vectors stacked vertically or, equivalently, column vectors placed side by side. We are about to see how the transpose swaps these two views. A fuller treatment, covering how to read off entries, shapes like \(n \times d\), and how matrices act in models, comes in the next major section, From one house to a dataset matrix.

Suppose we have a matrix:

$$ A = \begin{bmatrix} 1 & 2 & 3 \\ 4 & 5 & 6 \end{bmatrix} $$This matrix has 2 rows and 3 columns:

$$ A \in \mathbb{R}^{2 \times 3} $$The transpose is:

$$ A^\top = \begin{bmatrix} 1 & 4 \\ 2 & 5 \\ 3 & 6 \end{bmatrix} $$Now the shape is:

$$ A^\top \in \mathbb{R}^{3 \times 2} $$The first row of \(A\) becomes the first column of \(A^\top\). The second row of \(A\) becomes the second column of \(A^\top\).

In general, if:

$$ A \in \mathbb{R}^{m \times n} $$then:

$$ A^\top \in \mathbb{R}^{n \times m} $$

Code warning: 1-D arrays are not row or column vectors

In mathematics, we distinguish between:

$$ \mathbb{R}^{1 \times d} $$and:

$$ \mathbb{R}^{d \times 1} $$But in NumPy or PyTorch, a one-dimensional array may have shape:

(d,)

This is not the same as:

(1, d)

and it is not the same as:

(d, 1)

For example:

import numpy as np

x = np.array([1200, 3, 2, 10, 3.2])

print(x.shape)

Output:

(5,)

This is a 1-D array.

If we write:

print(x.T.shape)

the shape is still:

(5,)

The transpose did not turn it into a column vector, because the array has only one axis.

To create a row vector:

x_row = x.reshape(1, 5)

print(x_row.shape)

Output:

(1, 5)

To create a column vector:

x_col = x.reshape(5, 1)

print(x_col.shape)

Output:

(5, 1)

Now transposition behaves as expected:

print(x_row.T.shape)

Output:

(5, 1)

and:

print(x_col.T.shape)

Output:

(1, 5)
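Besides `reshape(1, 5)` and `reshape(5, 1)`, two other common NumPy idioms add the missing axis without hard-coding the length. The sketch below shows them; the variable names are chosen only for this illustration.

```python
import numpy as np

x = np.array([1200, 3, 2, 10, 3.2])  # shape (5,)

x_row = x[np.newaxis, :]   # add a leading axis  -> shape (1, 5)
x_col = x[:, np.newaxis]   # add a trailing axis -> shape (5, 1)
x_col2 = x.reshape(-1, 1)  # -1 lets NumPy infer the length -> shape (5, 1)

print(x_row.shape, x_col.shape, x_col2.shape)  # (1, 5) (5, 1) (5, 1)
```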

From one house to a dataset matrix

Machine learning rarely uses only one house. Usually, we have many houses.

Suppose we have three houses:

| House | Area | Bedrooms | Floors | Age | Distance | North | South | Price |

|---|---|---|---|---|---|---|---|---|

| 1 | 1200 | 3 | 2 | 10 | 3.2 | 1 | 0 | 9.0 |

| 2 | 850 | 2 | 1 | 18 | 8.5 | 0 | 1 | 6.5 |

| 3 | 1600 | 4 | 2 | 5 | 1.1 | 1 | 0 | 12.0 |

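If price is the target, then each row of inputs has 7 features (this table lists only the North and South indicator columns), and the input matrix is: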

$$ X = \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 & 1 & 0 \\ 850 & 2 & 1 & 18 & 8.5 & 0 & 1 \\ 1600 & 4 & 2 & 5 & 1.1 & 1 & 0 \end{bmatrix} $$The target vector is:

$$ y = \begin{bmatrix} 9.0 \\ 6.5 \\ 12.0 \end{bmatrix} $$Here:

$$ X \in \mathbb{R}^{3 \times 7} $$and:

$$ y \in \mathbb{R}^{3} $$or, if written explicitly as a column:

$$ y \in \mathbb{R}^{3 \times 1} $$

Rows as examples

Each row of \(X\) is one house.

The first row is:

$$ \begin{bmatrix} 1200 & 3 & 2 & 10 & 3.2 & 1 & 0 \end{bmatrix} $$This represents House 1.

The second row is:

$$ \begin{bmatrix} 850 & 2 & 1 & 18 & 8.5 & 0 & 1 \end{bmatrix} $$This represents House 2.

The third row is:

$$ \begin{bmatrix} 1600 & 4 & 2 & 5 & 1.1 & 1 & 0 \end{bmatrix} $$This represents House 3.

So the number of rows is the number of data points:

$$ n = 3 $$

Columns as features

Each column of \(X\) is one feature.

The first column is area:

$$ \begin{bmatrix} 1200 \\ 850 \\ 1600 \end{bmatrix} $$The second column is bedrooms:

$$ \begin{bmatrix} 3 \\ 2 \\ 4 \end{bmatrix} $$The fifth column is distance:

$$ \begin{bmatrix} 3.2 \\ 8.5 \\ 1.1 \end{bmatrix} $$So the number of columns is the number of input features:

$$ d = 7 $$

The design matrix

In machine learning, the input matrix is often called the design matrix.

We usually write:

$$ X \in \mathbb{R}^{n \times d} $$where:

- \(n\) is the number of examples,

- \(d\) is the number of features.

The \(i\)-th data point is often written as:

$$ x_i \in \mathbb{R}^d $$If \(x_i\) is treated as a column vector, then the dataset matrix is written as:

$$ X = \begin{bmatrix} x_1^\top \\ x_2^\top \\ \vdots \\ x_n^\top \end{bmatrix} $$This notation is very important.

It says:

- each \(x_i\) is naturally a column vector,

- each row of \(X\) is \(x_i^\top\),

- the full dataset stacks transposed data points row by row.
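The stacking described above can be sketched in NumPy using the three houses from the table. The names `x1`, `x2`, `x3` are illustrative; each is stored as a 1-D array, and `np.stack` places them as the rows of \(X\).

```python
import numpy as np

# Each house as a 1-D feature array: area, bedrooms, floors, age, distance, North, South.
x1 = np.array([1200, 3, 2, 10, 3.2, 1, 0])
x2 = np.array([850, 2, 1, 18, 8.5, 0, 1])
x3 = np.array([1600, 4, 2, 5, 1.1, 1, 0])

# Stack the data points row by row to form the design matrix.
X = np.stack([x1, x2, x3])
y = np.array([9.0, 6.5, 12.0])  # target prices, one per row of X

n, d = X.shape
print(X.shape)  # (3, 7)
print(y.shape)  # (3,)
print(X[1])     # second row: the features of House 2
print(X[:, 0])  # first column: the area of every house
```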

So:

$$ x_i \in \mathbb{R}^{d} $$but:

$$ x_i^\top \in \mathbb{R}^{1 \times d} $$and:

$$ X \in \mathbb{R}^{n \times d} $$

What is a vector mathematically?

A vector is not merely a list of numbers. A vector is an object that can be added to other vectors and multiplied by scalars while staying inside the same space.

For example, if:

$$ u = \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} $$and:

$$ v = \begin{bmatrix} 4 \\ 5 \\ 6 \end{bmatrix} $$then:

$$ u + v = \begin{bmatrix} 1+4 \\ 2+5 \\ 3+6 \end{bmatrix} = \begin{bmatrix} 5 \\ 7 \\ 9 \end{bmatrix} $$This result is still a vector in \(\mathbb{R}^3\).

Vector addition

For two vectors:

$$ u = \begin{bmatrix} u_1 \\ u_2 \\ \vdots \\ u_d \end{bmatrix} $$and:

$$ v = \begin{bmatrix} v_1 \\ v_2 \\ \vdots \\ v_d \end{bmatrix} $$their sum is:

$$ u+v = \begin{bmatrix} u_1+v_1 \\ u_2+v_2 \\ \vdots \\ u_d+v_d \end{bmatrix} $$Vectors can be added only when their dimensions match.

For example:

$$ \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} + \begin{bmatrix} 4 \\ 5 \\ 6 \end{bmatrix} = \begin{bmatrix} 5 \\ 7 \\ 9 \end{bmatrix} $$But this is not valid:

$$ \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} + \begin{bmatrix} 4 \\ 5 \end{bmatrix} $$because the first vector is in \(\mathbb{R}^3\), while the second is in \(\mathbb{R}^2\).

Scalar multiplication

If:

$$ u = \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} $$and:

$$ \alpha = 2 $$then:

$$ \alpha u = 2 \begin{bmatrix} 1 \\ 2 \\ 3 \end{bmatrix} = \begin{bmatrix} 2 \\ 4 \\ 6 \end{bmatrix} $$In general:

$$ \alpha u = \begin{bmatrix} \alpha u_1 \\ \alpha u_2 \\ \vdots \\ \alpha u_d \end{bmatrix} $$This operation scales the vector.

In feature space, multiplying a vector by \(\alpha > 0\) changes its length by the factor \(\alpha\) but keeps its direction. A negative \(\alpha\) also reverses the direction.
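Both operations map directly onto NumPy arrays. The following sketch repeats the examples from this section and shows that adding vectors of different dimensions raises an error; the flag name `mismatch_rejected` is just for illustration.

```python
import numpy as np

u = np.array([1, 2, 3])
v = np.array([4, 5, 6])

print(u + v)  # [5 7 9]
print(2 * u)  # [2 4 6]

# Adding a 3-component vector to a 2-component vector is rejected.
try:
    u + np.array([4, 5])
    mismatch_rejected = False
except ValueError:
    mismatch_rejected = True
print(mismatch_rejected)  # True
```

One caveat: NumPy broadcasting does allow a length-1 array to be added to a longer one, which has no counterpart in the strict vector-space rule, so shape checks are still worth doing by hand.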

Norm, distance, and similarity

The length of a vector is called its norm.

The most common norm is the Euclidean norm:

$$ \|x\|_2 = \sqrt{x_1^2+x_2^2+\cdots+x_d^2} $$

For example:

$$ x = \begin{bmatrix} 3 \\ 4 \end{bmatrix} $$

Then:

$$ \|x\|_2 = \sqrt{3^2+4^2} = \sqrt{9+16} = \sqrt{25} = 5 $$

This is the same geometry as the Pythagorean theorem.

Distance between two vectors is computed by subtracting them first:

$$ \text{distance}(u,v) = \|u-v\|_2 $$

Suppose:

$$ u = \begin{bmatrix} 1200 \\ 3 \end{bmatrix} $$

and:

$$ v = \begin{bmatrix} 1500 \\ 4 \end{bmatrix} $$

Then:

$$ u-v = \begin{bmatrix} -300 \\ -1 \end{bmatrix} $$

So:

$$ \|u-v\|_2 = \sqrt{(-300)^2+(-1)^2} = \sqrt{90000+1} \approx 300.0017 $$

The distance is dominated by the area difference because area has a much larger numerical scale than bedrooms.

This is why raw feature distances can be misleading.
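The norm and the distance computed above can be reproduced with `np.linalg.norm`, which returns the Euclidean norm of a 1-D array by default. A brief sketch using the same numbers:

```python
import numpy as np

x = np.array([3.0, 4.0])
print(np.linalg.norm(x))  # 5.0

u = np.array([1200.0, 3.0])  # area, bedrooms
v = np.array([1500.0, 4.0])

# Distance is the norm of the difference vector.
distance = np.linalg.norm(u - v)
print(round(distance, 4))  # 300.0017
```

The bedroom difference changes the result only in the third decimal place, which is the scale problem in numerical form.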

If we standardize the features, the comparison becomes more balanced.

For a feature \(x_j\), standardization often uses:

$$ z_j = \frac{x_j-\mu_j}{\sigma_j} $$where:

- \(\mu_j\) is the mean of feature \(j\),

- \(\sigma_j\) is the standard deviation of feature \(j\).

For example, suppose:

$$ \mu_{\text{area}} = 1200,\qquad \sigma_{\text{area}} = 300 $$and:

$$ \mu_{\text{bedrooms}} = 3,\qquad \sigma_{\text{bedrooms}} = 1 $$For a house with:

$$ \text{area}=1500,\qquad \text{bedrooms}=4 $$we get:

$$ z_{\text{area}} = \frac{1500-1200}{300}=1 $$and:

$$ z_{\text{bedrooms}} = \frac{4-3}{1}=1 $$So the standardized vector is:

$$ z = \begin{bmatrix} 1 \\ 1 \end{bmatrix} $$Now both features are measured in comparable standardized units.
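The same standardization can be written as one vectorized expression. This sketch uses the means and standard deviations assumed in the example above rather than values estimated from data.

```python
import numpy as np

# Means and standard deviations assumed in the text (area, bedrooms).
mu = np.array([1200.0, 3.0])
sigma = np.array([300.0, 1.0])

house = np.array([1500.0, 4.0])

# Standardize each feature: subtract its mean, divide by its standard deviation.
z = (house - mu) / sigma
print(z)  # [1. 1.]
```

With a real design matrix `X`, the statistics would typically be estimated per column, for example with `X.mean(axis=0)` and `X.std(axis=0)` on the training data.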

Linear combinations

A linear combination of vectors is formed by multiplying vectors by scalars and adding them.

If:

$$ u = \begin{bmatrix} 1 \\ 0 \end{bmatrix} $$and:

$$ v = \begin{bmatrix} 0 \\ 1 \end{bmatrix} $$then:

$$ 3u + 4v = 3 \begin{bmatrix} 1 \\ 0 \end{bmatrix} + 4 \begin{bmatrix} 0 \\ 1 \end{bmatrix} $$

$$ 3u + 4v = \begin{bmatrix} 3 \\ 0 \end{bmatrix} + \begin{bmatrix} 0 \\ 4 \end{bmatrix} $$

$$ 3u + 4v = \begin{bmatrix} 3 \\ 4 \end{bmatrix} $$

Machine-learning models are full of linear combinations.

A neuron computes a weighted combination of input features.

A linear regression model computes a weighted combination of input features.

A matrix multiplication computes many linear combinations at once.
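To make the link between linear combinations and matrix multiplication concrete, the sketch below computes \(3u + 4v\) directly and then as a matrix-vector product. The names `M` and `coeffs` are introduced only for this illustration.

```python
import numpy as np

u = np.array([1, 0])
v = np.array([0, 1])

combo = 3 * u + 4 * v
print(combo)  # [3 4]

# The same combination as a matrix-vector product:
# the columns of M are u and v, and the coefficients are (3, 4).
M = np.column_stack([u, v])
coeffs = np.array([3, 4])
print(M @ coeffs)  # [3 4]
```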

The first machine-learning model: a weighted sum

Now suppose we want to predict house price from features.

Use four features:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 10 \\ 3.2 \end{bmatrix} $$where:

- \(x_1=1200\) is area,

- \(x_2=3\) is bedrooms,

- \(x_3=10\) is age,

- \(x_4=3.2\) is distance.

Let the model weights be:

$$ w = \begin{bmatrix} 0.003 \\ 0.8 \\ -0.02 \\ -0.15 \end{bmatrix} $$and let the bias be:

$$ b = 1.5 $$A simple linear model predicts:

$$ \hat{y} = x^\top w + b $$

Dot product

The dot product is:

$$ x^\top w = \begin{bmatrix} 1200 & 3 & 10 & 3.2 \end{bmatrix} \begin{bmatrix} 0.003 \\ 0.8 \\ -0.02 \\ -0.15 \end{bmatrix} $$Multiply matching components and add:

$$ x^\top w = 1200(0.003) + 3(0.8) + 10(-0.02) + 3.2(-0.15) $$Now compute each part:

$$ 1200(0.003)=3.6 $$

$$ 3(0.8)=2.4 $$

$$ 10(-0.02)=-0.2 $$

$$ 3.2(-0.15)=-0.48 $$

So:

$$ x^\top w = 3.6 + 2.4 - 0.2 - 0.48 = 5.32 $$

Now add the bias:

$$ \hat{y} = 5.32 + 1.5 = 6.82 $$

If the true price is:

$$ y = 9.0 $$

then the model underpredicts the price.
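The hand calculation above corresponds to a single line of NumPy. In this sketch `x` and `w` are 1-D arrays, so `x @ w` is the dot product and no explicit transpose is needed.

```python
import numpy as np

x = np.array([1200, 3, 10, 3.2])          # area, bedrooms, age, distance
w = np.array([0.003, 0.8, -0.02, -0.15])  # toy weights from the text
b = 1.5

# Weighted sum of the features plus the bias.
y_hat = x @ w + b
print(round(y_hat, 2))  # 6.82
```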

Prediction

The prediction formula:

$$ \hat{y} = x^\top w + b $$has a simple interpretation.

Each feature contributes something:

| Feature | Value | Weight | Contribution |

|---|---|---|---|

| Area | 1200 | 0.003 | 3.6 |

| Bedrooms | 3 | 0.8 | 2.4 |

| Age | 10 | -0.02 | -0.2 |

| Distance | 3.2 | -0.15 | -0.48 |

The bias contributes:

$$ b = 1.5 $$Total:

$$ 3.6 + 2.4 - 0.2 - 0.48 + 1.5 = 6.82 $$The positive weights increase the prediction. The negative weights decrease the prediction.

In this toy example:

- larger area increases predicted price,

- more bedrooms increase predicted price,

- older age decreases predicted price,

- larger distance from city center decreases predicted price.

Error and loss

The prediction error is:

$$ e = \hat{y} - y $$Using our values:

$$ e = 6.82 - 9.0 $$

$$ e = -2.18 $$

The model prediction is 2.18 units below the true price.

A common loss for regression is squared error:

$$ L = (\hat{y}-y)^2 $$So:

$$ L = (-2.18)^2 $$

$$ L = 4.7524 $$

The goal of training is to adjust \(w\) and \(b\) so that the loss becomes smaller across the training dataset.
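The error and the squared-error loss for this one example can be checked in plain Python. The values are rounded for display because floating-point subtraction leaves tiny representation errors.

```python
y_hat = 6.82  # prediction from the worked example
y = 9.0       # true price

error = y_hat - y   # negative: the model underpredicts
loss = error ** 2   # squared error is never negative

print(round(error, 2))  # -2.18
print(round(loss, 4))   # 4.7524
```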

Batch prediction

Now suppose we have three houses and four features:

$$ X = \begin{bmatrix} 1200 & 3 & 10 & 3.2 \\ 850 & 2 & 18 & 8.5 \\ 1600 & 4 & 5 & 1.1 \end{bmatrix} $$The weight vector is:

$$ w = \begin{bmatrix} 0.003 \\ 0.8 \\ -0.02 \\ -0.15 \end{bmatrix} $$The bias is:

$$ b = 1.5 $$The batch prediction is:

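$$ \hat{y} = Xw + b $$

Here \(b\) is a scalar, so strictly it is added to every entry of \(Xw\). Written out in full, \(\hat{y} = Xw + b\mathbf{1}\), where \(\mathbf{1}\) is the vector of all ones.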

First compute:

$$ Xw = \begin{bmatrix} 1200 & 3 & 10 & 3.2 \\ 850 & 2 & 18 & 8.5 \\ 1600 & 4 & 5 & 1.1 \end{bmatrix} \begin{bmatrix} 0.003 \\ 0.8 \\ -0.02 \\ -0.15 \end{bmatrix} $$For House 1:

$$ 1200(0.003)+3(0.8)+10(-0.02)+3.2(-0.15)=5.32 $$For House 2:

$$ 850(0.003)+2(0.8)+18(-0.02)+8.5(-0.15) = 2.55+1.6-0.36-1.275 = 2.515 $$

For House 3:

$$ 1600(0.003)+4(0.8)+5(-0.02)+1.1(-0.15) = 4.8+3.2-0.1-0.165 = 7.735 $$

So:

$$ Xw = \begin{bmatrix} 5.32 \\ 2.515 \\ 7.735 \end{bmatrix} $$Now add \(b=1.5\):

$$ \hat{y} = \begin{bmatrix} 5.32 \\ 2.515 \\ 7.735 \end{bmatrix} + \begin{bmatrix} 1.5 \\ 1.5 \\ 1.5 \end{bmatrix} $$Therefore:

$$ \hat{y} = \begin{bmatrix} 6.82 \\ 4.015 \\ 9.235 \end{bmatrix} $$This is one prediction for each house.
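The three predictions can be reproduced with one matrix-vector product. In this sketch `w` is a 1-D array, so NumPy returns a result of shape `(3,)` rather than an explicit \(3 \times 1\) column.

```python
import numpy as np

X = np.array([
    [1200, 3, 10, 3.2],
    [850, 2, 18, 8.5],
    [1600, 4, 5, 1.1],
])
w = np.array([0.003, 0.8, -0.02, -0.15])
b = 1.5

# b is a scalar, so NumPy adds it to every entry of X @ w.
y_hat = X @ w + b

print(y_hat.shape)         # (3,)
print(np.round(y_hat, 3))  # approximately 6.82, 4.015, 9.235
```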

Shape-wise:

$$ X \in \mathbb{R}^{3 \times 4} $$

$$ w \in \mathbb{R}^{4 \times 1} $$

Therefore:

$$ Xw \in \mathbb{R}^{3 \times 1} $$

The inner dimensions, here the 4 features, must match:

$$ (3 \times 4)(4 \times 1) = (3 \times 1) $$

Neural-network view

A neural network uses the same idea, but repeats it many times.

One neuron

A single neuron receives an input vector:

$$ x = \begin{bmatrix} x_1 \\ x_2 \\ \vdots \\ x_d \end{bmatrix} $$It has a weight vector:

$$ w = \begin{bmatrix} w_1 \\ w_2 \\ \vdots \\ w_d \end{bmatrix} $$It computes:

$$ z = w^\top x + b $$Expanded:

$$ z = w_1x_1+w_2x_2+\cdots+w_dx_d+b $$Then it applies an activation function:

$$ a = \sigma(z) $$For example, if \(\sigma\) is ReLU:

$$ a = \max(0,z) $$So a neuron is a weighted sum followed by a nonlinear function.
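A single ReLU neuron fits in a few lines. This is a minimal sketch: the function name `neuron` and the input and weight values are made up for illustration and are not taken from the house example.

```python
import numpy as np

def neuron(x, w, b):
    """Weighted sum followed by ReLU: a = max(0, w^T x + b)."""
    z = w @ x + b
    return max(0.0, z)

x = np.array([1.0, 2.0, 3.0])     # made-up input
w = np.array([0.5, -1.0, 0.25])   # made-up weights

print(neuron(x, w, b=1.0))   # z = 0.5 - 2.0 + 0.75 + 1.0 = 0.25, so a = 0.25
print(neuron(x, w, b=-1.0))  # z = -1.75, so ReLU outputs 0.0
```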

One layer

A layer contains many neurons.

Suppose the input has \(d=4\) features and the layer has \(h=3\) neurons.

The input is:

$$ x \in \mathbb{R}^{4} $$The weight matrix is:

$$ W \in \mathbb{R}^{4 \times 3} $$The bias vector is:

$$ b \in \mathbb{R}^{3} $$If \(x\) is written as a row vector, then the layer computes:

$$ z = xW + b $$The shape is:

$$ (1 \times 4)(4 \times 3) = 1 \times 3 $$

So \(z\) is a \(1 \times 3\) row, which we identify with a vector in \(\mathbb{R}^{3}\):

$$ z \in \mathbb{R}^{3} $$

Each component of \(z\) is the pre-activation value of one neuron.

For example:

$$ z = \begin{bmatrix} z_1 & z_2 & z_3 \end{bmatrix} $$Then:

$$ a = \begin{bmatrix} \sigma(z_1) & \sigma(z_2) & \sigma(z_3) \end{bmatrix} $$

Batch input

In deep learning, we usually process many examples at once.

Let:

$$ X \in \mathbb{R}^{n \times d} $$where:

- \(n\) is the batch size,

- \(d\) is the number of features.

Let:

$$ W \in \mathbb{R}^{d \times h} $$where:

- \(h\) is the number of neurons in the layer.

Then:

$$ Z = XW + b $$

Here the bias \(b \in \mathbb{R}^{h}\) is added to every row of \(XW\). The shape is:

$$ (n \times d)(d \times h) = n \times h $$So:

$$ Z \in \mathbb{R}^{n \times h} $$For example, if:

$$ n=32,\qquad d=7,\qquad h=64 $$then:

$$ X \in \mathbb{R}^{32 \times 7} $$

$$ W \in \mathbb{R}^{7 \times 64} $$

and:

$$ Z = XW + b \in \mathbb{R}^{32 \times 64} $$This means:

- 32 examples are processed together,

- each example has 7 input features,

- the layer produces 64 hidden features for each example.

In code, this often appears as:

import torch

X = torch.randn(32, 7) # 32 houses, 7 features each

W = torch.randn(7, 64) # maps 7 input features to 64 hidden features

b = torch.randn(64) # one bias per hidden neuron

Z = X @ W + b

print(Z.shape)

Output:

torch.Size([32, 64])

This is the same mathematics:

$$ Z = XW + b $$The code and the mathematics are two views of the same operation.
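The activation step of a layer, \(\sigma\) applied to each entry, can be added in the same way. The sketch below is a NumPy version that assumes ReLU as the activation and uses random values in place of trained weights, so only the shapes and the sign of the outputs are meaningful.

```python
import numpy as np

rng = np.random.default_rng(0)  # fixed seed so the run is repeatable

X = rng.standard_normal((32, 7))   # 32 examples, 7 features each
W = rng.standard_normal((7, 64))   # 7 input features -> 64 hidden features
b = rng.standard_normal(64)        # one bias per hidden neuron

Z = X @ W + b          # b is broadcast across the 32 rows
A = np.maximum(0, Z)   # ReLU applied entry by entry

print(Z.shape, A.shape)  # (32, 64) (32, 64)
print(A.min() >= 0)      # True
```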

Common mistakes

Mistake 1: Confusing a scalar with a vector

A scalar is one number:

$$ x = 9.0 $$A vector is an ordered collection of numbers:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \end{bmatrix} $$Do not call every number a vector.

Mistake 2: Forgetting what the components mean

The vector:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \end{bmatrix} $$is meaningful only if we know the feature order.

It may mean:

$$ [ \text{area}, \text{bedrooms}, \text{floors}, \text{age} ] $$Without feature names, the numbers lose their interpretation.

Mistake 3: Treating categorical labels as ordinary numbers

If:

$$ \text{North}=1,\qquad \text{South}=2,\qquad \text{East}=3 $$the model may interpret this as an artificial ordering.

One-hot encoding avoids this simple problem by representing categories as indicator vectors.

Mistake 4: Ignoring units and scales

A feature like area may be around 1200, while bedrooms may be around 3.

Any computation built on distances or dot products can then be dominated by the feature with the larger numerical scale, regardless of how informative that feature is.

This is why standardization is often useful:

$$ z_j = \frac{x_j-\mu_j}{\sigma_j} $$

Mistake 5: Thinking a 1-D array has row or column orientation

In mathematical notation:

$$ x^\top $$has a clear meaning.

But in NumPy, an array with shape:

(5,)

is neither explicitly a row vector nor explicitly a column vector.

For explicit orientation, use:

(1, 5)

or:

(5, 1)

Mistake 6: Multiplying incompatible shapes

The product:

$$ Xw $$is valid when:

$$ X \in \mathbb{R}^{n \times d} $$and:

$$ w \in \mathbb{R}^{d \times 1} $$because the inner dimensions match:

$$ (n \times d)(d \times 1) $$But this is not valid:

$$ (n \times d)(n \times 1) $$unless \(d=n\), and even then it would usually not mean the desired operation.
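NumPy enforces the same rule at run time. In this sketch, with placeholder arrays of ones, the matching product succeeds and the mismatched one raises a `ValueError`; the names `w_good`, `w_bad`, and `raised` are chosen only for illustration.

```python
import numpy as np

X = np.ones((3, 4))       # n = 3 examples, d = 4 features
w_good = np.ones((4, 1))  # d x 1: inner dimensions match
w_bad = np.ones((3, 1))   # n x 1: inner dimensions 4 and 3 do not match

print((X @ w_good).shape)  # (3, 1)

try:
    X @ w_bad
    raised = False
except ValueError as err:
    raised = True
    print("shape error:", err)
print(raised)  # True
```

Printing `.shape` before a product is a cheap habit that catches most of these errors early.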

Section summary

We began with one number:

$$ x = 9.0 $$This is a scalar.

Then we described one house using many numbers:

$$ x = \begin{bmatrix} 1200 \\ 3 \\ 2 \\ 10 \\ 3.2 \end{bmatrix} $$This is a vector.

We learned that a row vector has shape:

$$ 1 \times d $$while a column vector has shape:

$$ d \times 1 $$We learned that transpose changes rows into columns:

$$ x_{\text{row}}^\top = x_{\text{col}} $$We then stacked many examples into a dataset matrix:

$$ X \in \mathbb{R}^{n \times d} $$where rows are examples and columns are features.

Finally, we saw the first machine-learning model:

$$ \hat{y} = x^\top w + b $$and its batch form:

$$ \hat{y} = Xw + b $$This same structure appears inside neural networks:

$$ Z = XW + b $$So the path from data to deep learning begins with a simple idea:

one object becomes numbers, numbers become a vector, vectors become matrices, and matrices become the language of machine learning.